Open Source and AI: Three European Regulations, Three Logics, One Ecosystem

The use of artificial intelligence (AI) raises major legal, ethical and societal challenges in Europe, to which legislators are attempting to respond through new European regulations. The European Union has adopted Regulation (EU) 2024/1689 on Artificial Intelligence (hereinafter “RIA” or “AI Act”), which grants a derogation regime to Open Source AI systems, but with limitations that reveal a narrow interpretation of what “Open Source” means in the context of AI.

Through its series of four articles “Open Source and AI”, inno3 offers an analysis of the RIA, its impact on the Open Source ecosystem, and actionable paths for implementation.

The first article, “Open Source and AI: The Contributions of the European AI Act”, provides key reference points on the place of Open Source in this Regulation, with a focus on general-purpose AI (GPAI) models and AI systems.

The second article, “Open Source and AI: Three European Regulations, Three Logics, One Ecosystem”, places the RIA in the broader context of a set of European regulations (NPLD, CRA, etc.) and analyzes their impacts on the European Open Source ecosystem and its international dynamics.

The final article, “Open Source and AI: What Are We Really Talking About?”, first revisits the different definitions of “open” AI and their points of divergence, then proposes actionable paths for implementation.

Key Takeaways

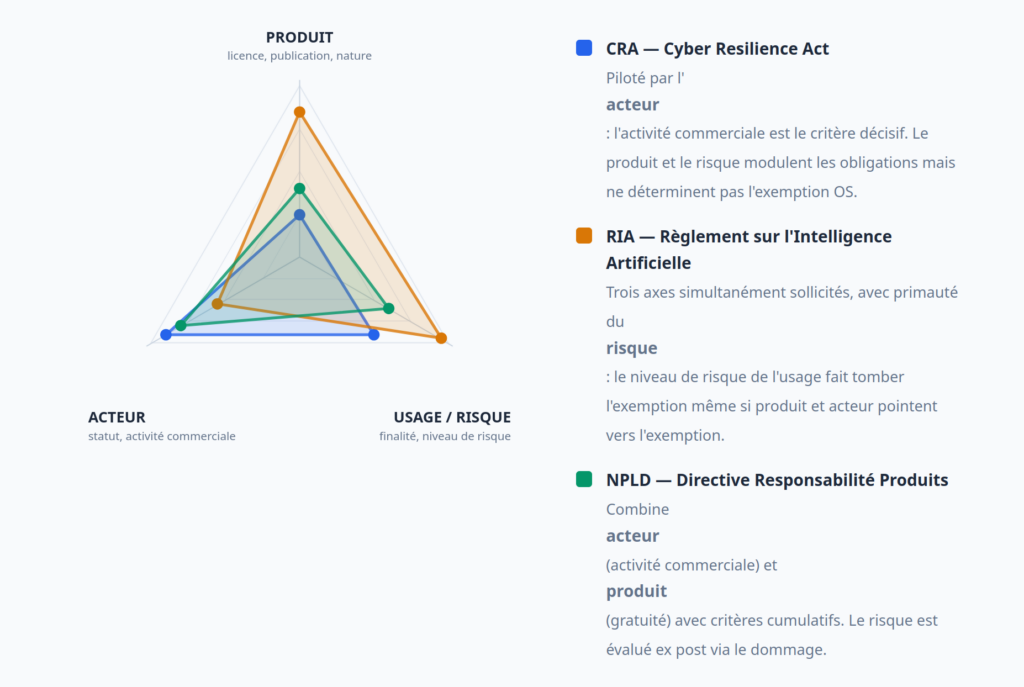

- An open source developer or maintainer simultaneously faces three European texts (CRA, RIA, NPLD) whose exemptions are not aligned. The CRA reasons in terms of commercial activity, the RIA in terms of license and publication, the NPLD in terms of free provision.

- The same actor can be exempted by one and subject to another.

- Internationally, the USA, China and Europe converge towards favorable treatment of openness, but for radically different reasons — national security (USA), digital hegemony (China), fundamental rights (Europe).

The free software and Open Source ecosystem finds itself at the heart of a bundle of convergent regulations: the Cyber Resilience Act (CRA, 2024), the AI Regulation (RIA, 2024), and the new Directive on Liability for Defective Products (NPLD, 2024), to mention only the most recent European instruments.

While the overall aim of preserving “open innovation” is clear, each of these texts proposes exemptions for Open Source based on different criteria. The CRA speaks of “commercial activity”. The RIA speaks of “free license” and “publication”. The NPLD speaks of “free provision”.

For the same maintainer or contributor, the regulatory consequences diverge depending on the text considered. These three texts share a fundamental point of convergence: the regulation of placing on the market, which is a constitutive competence of European law. It is at the moment when a product (including software, an AI model) enters into European commercial circulation that the obligations are triggered.

However, the legislator was unable to simply exclude Open Source from this logic: Open Source does not prevent commercialization, and it represents considerable collective value. Each text therefore attempted to draw its own line between community exemption and commercial subjection. The problem is that these three lines do not overlap.

Placing on the Market: Point of Convergence of the Three European Regulations

The Union Regulates the Circulation of Goods

The common foundation of all three texts (CRA, RIA, NPLD) is the Union’s competence to regulate the circulation of goods in the internal market. This competence rests on Article 114 of the TFEU (Treaty on the Functioning of the European Union), which authorizes the Parliament and the Council to adopt measures for the approximation of national laws “intended to establish and ensure the functioning of the internal market”.

The three regulations fall within this framework: the CRA and the RIA explicitly cite Article 114 TFEU as their legal basis; the NPLD, as a directive, also relies on it to harmonize liability regimes. This is not insignificant: it means that these texts regulate above all the circulation of products (including software and AI models) in the single market.

The pivotal concepts are “placing on the market” and “making available on the market”. These concepts have a long tradition in European product law. They have been harmonized since Decision No 768/2008/EC (the “New Legislative Framework”) and explained in the Blue Guide on the implementation of European product regulations, revised in 2022.

The Blue Guide recalls that “placing on the market” designates the first act of placing a product on the market of the Union, and that “making available” covers any supply for distribution or use, in the context of a commercial activity, whether carried out for payment or free of charge.

It is this formulation (used well before the emergence of AI texts) that the three regulations take up and adapt.

The RIA provides the most explicit application for AI. Article 3, point (9), defines “placing on the market” as “the first making available of an AI system or a general purpose AI model on the market of the Union”. Article 3, point (10), clarifies “making available on the market”: the supply of an AI system or a general purpose AI model intended for distribution or use on the market of the Union, in the context of a commercial activity, whether carried out for payment or free of charge. Finally, Article 3, point (11), defines “putting into service”: the supply for first direct use to the deployer.

The CRA adopts materially identical definitions (Article 3, points 20 to 22), as does the NPLD in its conception of the “manufacturer” who “makes a product available”.

What is striking in the common formulation is that it explicitly includes supplies made free of charge if they fall within a commercial activity. The Union did not create a simple exemption for Open Source; rather, it developed a complex condition that recalls the well-known principle that “free” software means freedom, not absence of price.

The other contribution, which is a source of anxiety for many, is that these texts aim to provide greater transparency in the digital sphere, in particular to trace a non-compliant product back to the manufacturer. This traceability (and underlying liability) creates tension in the Open Source ecosystem, where the notion of “manufacturer” is fundamentally distributed, often voluntary and always “as is/without warranty”.

Thus, the entire work of the legislator has been, for each text, to clarify 1) what is meant by free software and/or Open Source and 2) when Open Source shifts from exemption to subjection:

- The CRA (Article 4) exempts products developed or provided “outside of a commercial activity”. Commercial activity is the dividing line: as soon as it exists, the exemption ceases. Nevertheless, the articulation with Open Source is not clarified. Recital 18 specifies that “the mere fact of publishing software on a public repository does not in itself constitute a commercial activity”, which reassures volunteer developers but leaves many gray areas: are sponsorship, indirect remuneration, or integration into a commercial service decisive? The CRA also establishes the status of “open-source software steward” (Article 3, point 14), an intermediate path for foundations that systematically support free projects and are “partners” of regulatory institutions.

- The RIA (Articles 2.12 and 53.2) reasons in terms of license and publication, not commercial activity. A high-risk AI system is excluded if it is provided “under an appropriate free license” and “with components or models published under a free license”. An open source GPAI model benefits from lighter obligations. But the RIA adds an implicit condition: the non-monetized character. Recital 103 of the RIA clarifies that “AI systems [free] developed without commercial purpose [are not subject to most of the obligations]”. Thus, the exemption rests nominally on form (free license) but materially on the absence of monetization. This tension between the provisions (articles) and their interpretation (recitals) can nevertheless be resolved if we consider once again that the main criterion remains that of placing on the market.

- The NPLD (recital 20) combines two criteria: the supplier who makes available free software “free of charge and outside of a commercial activity” is not a responsible manufacturer. This is the approach that seems the most stringent, cumulatively requiring free provision AND the absence of commercial activity, but which, once again, seems capable of being harmonized with that of the other texts.

Depending on whether one adopts a common reading of these texts, and on the facts of each case, the same maintainer or even the same project could be exempted by the CRA, subject to the RIA, and partially covered by the NPLD.
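The misalignment described above can be made concrete with a small sketch. It encodes, as deliberately simplified boolean tests, the three criteria as this article reads them. The `Project` fields, the function names and the example projects are all hypothetical, and the tests are a coarse approximation for illustration, not a statement of how an authority or court would assess a real situation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Project:
    """Hypothetical facts about a software or model release (illustrative only)."""
    commercial_activity: bool  # supplied in the context of a commercial activity
    free_license: bool         # released under a free / open source license
    published: bool            # components or model publicly available
    monetized: bool            # the component itself is directly monetized
    free_of_charge: bool       # provided without payment


def cra_exempt(p: Project) -> bool:
    # CRA, as read in this article: commercial activity is the only criterion.
    return not p.commercial_activity


def ria_exempt(p: Project) -> bool:
    # RIA, as read in this article: license and publication, plus the
    # implicit non-monetization condition drawn from the recitals.
    return p.free_license and p.published and not p.monetized


def npld_exempt(p: Project) -> bool:
    # NPLD, as read in this article: cumulative test (free of charge AND
    # outside of a commercial activity).
    return p.free_of_charge and not p.commercial_activity


def assess(p: Project) -> dict[str, bool]:
    """Return, per text, whether the simplified exemption test is met."""
    return {"CRA": cra_exempt(p), "RIA": ria_exempt(p), "NPLD": npld_exempt(p)}


# Hypothetical example 1: a volunteer project, free license, no monetization.
volunteer = Project(False, True, True, False, True)

# Hypothetical example 2: a freely licensed component that is not itself
# monetized but is supplied within a commercial activity.
commercial_free_download = Project(True, True, True, False, True)

# Hypothetical example 3: a non-commercial release under a non-free license.
restricted_license = Project(False, False, True, False, True)

for name, project in [
    ("volunteer", volunteer),
    ("commercial_free_download", commercial_free_download),
    ("restricted_license", restricted_license),
]:
    print(name, assess(project))
```

Under these assumptions, the second example meets the simplified RIA test but neither the CRA nor the NPLD test, while the third meets the CRA and NPLD tests but not the RIA one. The gray areas discussed next are precisely the cases where the value of `commercial_activity` itself is uncertain.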

Gray Areas: Between Formal Exemption and Market Reality

Several configurations remain undecided, some of which have already been discussed in the context of CRA analysis:

The salaried maintainer of a large tech company: a developer at Google, Meta or Microsoft, paid €150,000 per year, devotes 20% of their time to maintaining a Linux or PyTorch project. Their employer derives prestige, technical influence, talent attraction from it. Is there “commercial activity”?

The foundation that receives donations or sponsorships: some Open Source foundations receive donations and contributions that run into millions to maintain certain software directly or indirectly important to the commercial activity of those who fund it. Is there “commercial activity”? This is all the more debatable when one explores the different types of statuses that such foundations may use, some of which are very close to companies (which would pool developments for their members).

The open core or freemium model: a startup offers an open source version of its platform alongside a paid enterprise version (additional features, support, infrastructure). Who is subject to the CRA, the RIA, the NPLD? The open source version, in isolation, technically falls under the exemption: free of charge, free license, no direct monetization of this component. Guidelines relating to the CRA consider that one must reason “contractual situations by contractual situations”, which could pose difficulties in light of practices around Open Source licenses (which are only contractual offers, i.e., contracts that form when persons accept the license, without necessarily being aware of it).

Dual-licensing: a publisher offers a GPL version of the code and a paid proprietary license. Same question: does the exemption cover the isolated GPL version?

What one senses from the legislator is that it intends to subject all economic operators to the same rules (whether or not they are contributors to free and Open Source software), which has the advantage of equal treatment, but raises the question of equity: is it fair that companies that contribute massively to the European open digital infrastructure are subject to the same rules as digital giants who reuse their software? The answer certainly lies in enforcement, but the question deserves to be asked because resilience to legal action is not the same for everyone.

To this must be added the other actors who participate in the deployment of Open Source software (contributing or not), because even if an Open Source component can be exempted in one context, it will not be in another that becomes commercial. Thus, recital 19 of the CRA makes clear: “Producers who incorporate or modify FOSS products in their own products must comply with the requirements of this regulation”.

This creates a fragmentation of responsibility, potentially internalizing the cost of compliance among those who commercialize.

There are therefore several urgent matters:

- Clarify what is meant by free software and/or Open Source and by the concept of placing on the market. The definitions exist, but their implementation in these three legislative texts demonstrates a need for harmonization;

- Ensure that this regulatory complexity is also accompanied by a system of protection for the volunteer Open Source ecosystem, which will only very painfully be able to defend itself before the courts in case of action (notably for the NPLD);

- Reflect on an extension of the concept of “open-source software steward” beyond the CRA.

The International Dimension and Additional Regulatory Layers

Unsurprisingly, Europe is not alone in regulating AIs — including Open Source AIs. The subject is thus addressed:

- in the United States (national security): open up models that are not technically sensitive, in order to accelerate innovation and maintain competitive superiority.

- in China (digital hegemony): publish broadly to become the indispensable technological base of the world.

- in Europe (fundamental rights): regulate open and closed AI to protect citizens, even at the risk of slowing innovation.

These three logics are consistent with their respective geopolitical contexts, but are not designed to articulate with each other.

Export Controls in the United States: The EAR AI Diffusion Framework

In January 2025, the Bureau of Industry and Security (BIS) of the U.S. Department of Commerce adopted an AI Diffusion Framework (ADF) that governs the export of AI model weights. The regime includes a technical threshold: models trained with more than 10^26 FLOP (floating-point operations) are subject to export control. The key exemption, however, is that weights made public (Open Source) escape control.
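To give an order of magnitude for this threshold, the sketch below estimates training compute with a common rule of thumb (roughly 6 floating-point operations per parameter per training token) and compares it to 10^26. The rule of thumb, the function names and the model sizes are assumptions introduced here for illustration; they come neither from the article nor from the text of the regulation, which should be consulted for the actual method of calculation.

```python
# Threshold cited in the text: 10^26 operations.
EAR_THRESHOLD_FLOP = 1e26


def estimated_training_flop(n_params: float, n_tokens: float) -> float:
    """Rough training-compute estimate.

    Assumption: about 6 FLOP per parameter per training token, a widely used
    heuristic for dense transformer models. It is not the legal method.
    """
    return 6.0 * n_params * n_tokens


def above_threshold(n_params: float, n_tokens: float) -> bool:
    """True if the rough estimate exceeds the 10^26 threshold."""
    return estimated_training_flop(n_params, n_tokens) > EAR_THRESHOLD_FLOP


def export_controlled(n_params: float, n_tokens: float, weights_public: bool) -> bool:
    """Simplified reading of the regime described in the text:
    published weights escape control; otherwise the threshold applies."""
    if weights_public:
        return False
    return above_threshold(n_params, n_tokens)


# Hypothetical model A: 70 billion parameters, 15 trillion training tokens.
print(above_threshold(70e9, 15e12))

# Hypothetical model B: 2 trillion parameters, 20 trillion training tokens.
print(above_threshold(2e12, 20e12))
print(export_controlled(2e12, 20e12, weights_public=False))
print(export_controlled(2e12, 20e12, weights_public=True))
```

With these hypothetical figures, model A is estimated at about 6.3 × 10^24 operations, well below the threshold, while model B reaches about 2.4 × 10^26 and would fall under control only if its weights were kept closed.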

This is an inverse approach to Europe: instead of regulating openness, the United States incentivizes it by exempting it from export controls. The logic is clearly geopolitical (national security) and also results from the underlying extraterritorial mechanism (it is necessary to allow freedoms in order to then sanction with force what happens across the Atlantic).

The EAR aims to prevent technology transfers to adversaries (China, Iran, Russia). But openness (global publication) is an inevitable leak.

The implication is that a European maintainer contributing to a collaborative ML project with US actors must verify that the project is not subject to the EAR, knowing that a US Open Source model subject to the EAR but published globally escapes control (but remains subject to the RIA in Europe).

This inclusion of models within the scope of the EAR (for dual-use goods) appears to be related to recent events that have set major AI platforms against the U.S. Department of War.

The European regulation on dual-use goods (Regulation (EU) 2021/821) also applies to AI technologies whose cryptographic components fall within controlled categories. This triple layer (US EAR, European RIA, European dual-use) constitutes a tangle that could be the subject of a dedicated article in this series.

China: The Strategy of Digital Hegemony

In 2023, China adopted Provisional Measures for the Management of Generative AI Services that provide for an exemption for R&D and internal use. Since then, China has amplified a strategy of massive openness of models: DeepSeek, Qwen (Alibaba), and others have accumulated more than 700 million downloads in 2025-2026.

This is a deliberate strategy to “dominate through openness”. Chinese models, published freely, become the mental infrastructure of the global ecosystem. Developers, startups, academics from around the world train and refine Chinese models. It is a form of soft power that creates technological dependence in the long term.

In fact, a Chinese GPAI model published under an open license is subject to the RIA (at least at the integrator level), which can be all the more complex given the “political” obligations with which Chinese companies must comply (including in terms of model creation).

In Europe: Copyright, TDM and Training Data – A Regulatory Blind Spot

The training of AI models (including open source models) relies heavily on Text and Data Mining (TDM) of content protected by copyright. Directive (EU) 2019/790 on copyright in the digital single market provides two TDM exceptions: Article 3 (scientific research exception, with no opt-out possible) and Article 4 (commercial exception, subject to the right of opt-out of rights holders).

The RIA, in Articles 53(1)(c) and (d), requires any GPAI model provider (including open source) to implement a copyright compliance policy and to publish a summary of the training data used. This is one of the few obligations maintained even for GPAI models benefiting from the lighter regime of Article 53(2).

This intersection between copyright and AI regulation creates specific tensions for the open ecosystem. An Open Source model trained on data where opt-out has not been verified exposes itself to litigation risk under the copyright directive and to non-compliance under the RIA.

The question becomes more complex when the training data themselves come from open datasets (Common Crawl, LAION) whose TDM legality is disputed.

These questions, which mix copyright, data law and sectoral regulation, deserve in-depth treatment which could also be the subject of a later article in this series.

Conclusions

Open Source as a Terrain of Innovation: A Doctrine to Affirm

Faced with this regulatory complexity, it should be recalled that open source is not merely a legal object to be regulated, but also a terrain of innovation to be protected. The Open Source Manifesto published in 2025 by Numeum articulates a clear vision: Open Source is a lever of competitiveness, sovereignty and innovation for Europe, not simply a software distribution model.

The Manifesto identifies four priorities: integrating open source into all key technological transitions (AI, cloud, cybersecurity), embedding it in European standards and regulations to guarantee transparency and interoperability, supporting development communities, and making it a default component of public procurement.

This doctrine aligns with the work of the European Commission which, in its “European Open Digital Ecosystems” strategy under consultation in 2025-2026, envisages open source as a strategic asset in service of the Union’s digital autonomy.

The issue is that regulation must not contradict industrial policy. If Europe affirms on one hand that open source is a pillar of its digital sovereignty (as suggested by the Draghi report and DINUM initiatives), it cannot, on the other hand, impose a regulatory framework that weakens the very actors that constitute this ecosystem.

The CRA, the RIA and the NPLD must therefore be read not only as compliance texts, but also in light of this industrial and political ambition. By harmonizing obligations for all commercial actors without distinguishing the nature of their contribution, one risks weakening the very soil that one says one wishes to encourage. If contributing to an open source project exposes a company to the same obligations as developing a proprietary product, the economic incentive to contribute to the commons weakens. Companies could rationally prefer to consume open source (as integrators) rather than contribute to it (as maintainers or co-developers), since active contribution increases their compliance surface without regulatory benefit. This would be a result directly contrary to the ambition stated by the European Commission in its strategy on open digital ecosystems.

A Need for Clarification

The three European regulations converge on the same anchor point: placing on the market as the moment triggering obligations. But the legislator, faced with an object (open source software) that circulates freely, massively and often free of charge while producing considerable economic value, was unable to draw a single line. Each text forged its own criterion: commercial activity (CRA), license and openness (RIA), free provision combined with the absence of commercial activity (NPLD).

These three approaches overlap without matching. Since Europe could neither ignore Open Source (too much value), nor exempt it completely (too much risk), nor treat it exactly like proprietary software (too much collateral damage), three workstreams appear necessary:

- An administrative clarification: the AI Office and national authorities must publish consolidated guidelines that harmonize the interpretation of “commercial activity” across the three texts.

- Institutional innovation: extend the steward concept beyond the CRA, create a cross-cutting compliance passport, and above all recognize the specific role of companies contributing to digital commons as distinct from that of commercial publishers.

- A doctrinal ambition: Europe must affirm an explicit and coherent doctrine where the regulation of AI and the promotion of open source as a common good do not contradict each other.
